AptaRed

Automated adversarial testing across text, audio, video, and image — powered by Goal based red teaming and live threat intelligence.

Washington DC, USA

Protect your AI systems from emerging threats with Apta Sentry, an advanced AI security platform designed to detect vulnerabilities, strengthen model safety, and support secure development workflows.

Automated AI red teaming, adversarial mutation, risk evaluation, and runtime monitoring for production language models. Built for security teams and ML engineers.

Explore the powerful security capabilities of Apta Sentry through our specialized protection modules designed to strengthen AI development, testing, and deployment.

Automated adversarial testing across text, audio, video, and image — powered by Goal based red teaming and live threat intelligence.

Finding a vulnerability doesn't make your model safer. What makes it safer is feeding what you learned back into how it is trained.

Your AI agents act autonomously — but traditional security tools can't see their decisions, tool calls, or data flows. AgentRed continuously stress-tests your agentic workflows with goal-driven adversarial attacks powered by live threat intelligence.

Pre-deployment testing catches what you know to look for. Production surfaces what you didn't. Every prompt. Every response. Evaluated in real time — before harm reaches users.

Supply chain attacks on AI models are documented and growing. Validate every model before it enters your environment. Then keep watching it.

Tools alone do not produce AI security. Without a clear evaluation strategy, calibrated benchmarks. The strategy and expertise that make your entire AptaConsult investment work.

Explore the powerful security capabilities of Apta Sentry through our specialized protection modules designed to strengthen AI development, testing, and deployment.

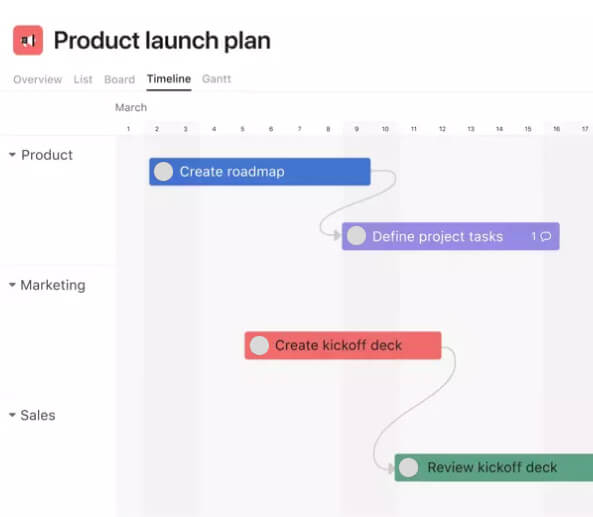

Automated adversarial testing across text, audio, video, and image — powered by live threat intelligence. Manual red teaming — where security engineers attempt to break an AI system by hand — is slow, inconsistent, and fundamentally limited by human imagination and bandwidth. Sentry automates the entire pipeline: from threat discovery to risk scoring to remediation routing, continuously.

Validate every model before it enters your environment. Then keep watching it. Supply chain attacks on AI models are documented and growing. Researchers have confirmed that malicious actors are publishing compromised models on public repositories — platforms where the majority of enterprises now source pre-trained weights (the learned parameters that define a model's behavior). Traditional security scanners cannot detect a trojaned model (one that behaves normally until a specific trigger activates hidden malicious behavior), a malicious pickle file (a Python serialization format that can execute arbitrary code when loaded), or a backdoored component embedded at the weight level.

Your AI agents are acting autonomously. Sentry ensures they stay within bounds. Agentic AI systems — AI that plans tasks, executes multi-step actions, calls external APIs, reads files, browses the web, and collaborates with other agents without continuous human oversight — represent the fastest-growing and least-secured category in enterprise AI. Traditional security tools were built to inspect code. They have no visibility into model decisions, tool calls, or the data flowing through agent workflows.

Every prompt. Every response. Evaluated in real time — before harm reaches users. Pre-deployment testing catches what you know to look for. Production surfaces what you didn't. Real users, adversarial creativity, and the compounding edge cases of scale consistently surface behaviors that no test suite fully anticipates. By the time a policy violation becomes visible in logs, it has already reached your users.

Every finding becomes a fix. Every fix becomes training data. Your AI compounds in quality with every cycle. Finding a vulnerability doesn't make your model safer. What makes it safer is feeding what you learned back into how it is trained. The gap between red team findings and model improvement is where most AI security programs stall — because bridging it requires high-quality training data that most teams cannot generate at speed or scale.

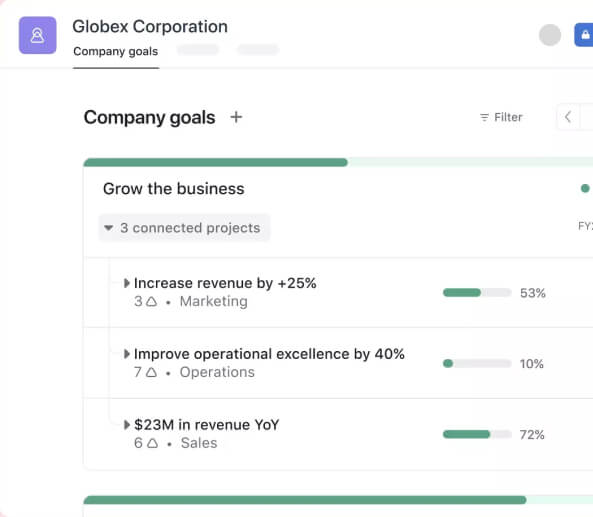

See how Apta Sentry is helping teams build safer AI applications through advanced security testing, vulnerability detection, and intelligent monitoring.

Parallel execution architecture vs sequential approaches. 5–10-minute assessments vs weeks of manual testing.

Reduced exposure to AI Exploits, less potential blast radius from adversarial inputs.

8x Model improvements by generating high-fidelity datasets proven to accelerate the improvements.

Prompts, Powered by a proprietary Seeding Engine with combination of various techniques that are created in real-time.

Have questions about AI security and how it works? Our FAQ section is designed to provide clear and helpful answers about Apta Sentry, its features, integration process, and use cases.

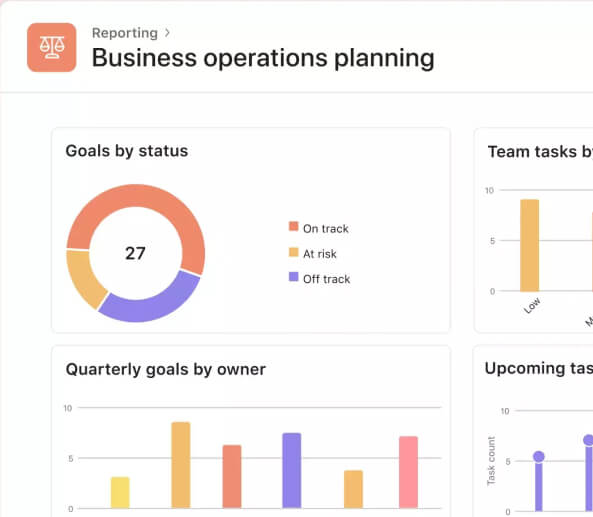

Discover key capabilities of Apta Sentry grouped together for a quick overview. From automated red teaming to real-time monitoring and model risk evaluation, these core features give you a snapshot of how we secure modern AI systems.

Continuously simulate adversarial attacks and prompt injections to uncover vulnerabilities before they reach production. Strengthen your AI models with proactive red teaming and mutation testing.

Manage evaluations, risk scores, and monitoring insights from a single centralized view. Track model performance, detect anomalies, and maintain full visibility across your AI systems.

Monitor live AI applications with ultra-low latency detection. Identify suspicious behavior, prevent data leakage, and ensure consistent model safety without slowing down performance.

OWASP LLM top 10, NIST AI RMF, and ISO 42001 mapped threat prompts. Categorized by industry vertical and compliance framework.

200+ mutation operators: direct injection, indirect injection, roleplay jailbreaks, cross-lingual variants, and scenario escalation.

Multi-turn, multi-modal evaluation with classifier pipelines. Per-attack scoring with confidence intervals. Full audit trail.

Red/blue signal synthesis produces patched system prompts, guardrail configurations, and RLHF training signals for hardening.

Welcome to Zenfy, where digital innovation meets strategic excellence. As a dynamic force in the realm of digital marketing, we are dedicated to propelling businesses into the spotlight of online success.

Our blog section shares expert knowledge, practical guidance, and industry updates to help developers and businesses build and deploy secure AI applications with confidence.